TrorYong OCR: Meet Your Tiny OCR Model

Abstract

Inspired by PARSeq (Bautista and Atienza 2022) and DTrOCR (Fujitake 2024), I designed a tiny OCR model called TrorYongOCR for the Scene Text Recognition task. A pretrained version is deployed on a Hugging Face Space here.

Methodology: Use Image Encoding as a Prefill Prompt

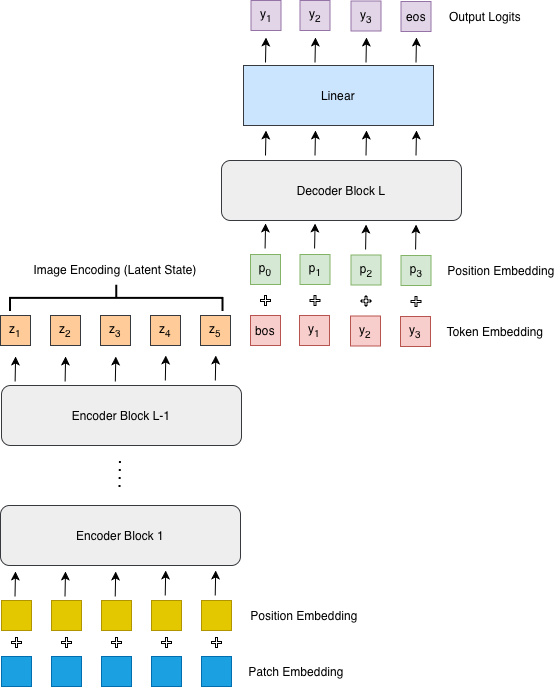

Inspired by PARSeq and DTrOCR, I design TrorYongOCR as follows. Given \(L\) transformer blocks:

- \(L-1\) blocks are encoding blocks that encode the input image

- the last block is a decoding block without a cross-attention mechanism

- in the decoding block:

  - the latent state of the image (the output of the encoding blocks) is concatenated with the input character embedding (token embedding, including the bos token, plus position embedding) to create the context vector, i.e. the key and value vectors (think of it as a prefill prompt)

  - the input character embedding (token embedding plus position embedding) is used as the query vector.
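The key/value layout described above can be pictured as a visibility mask. The sketch below is a minimal pure-Python illustration, not code from TrorYongOCR: the function name `build_prefill_mask` is hypothetical, and it assumes that each character query sees every image latent and, causally, the characters up to and including its own position.

```python
def build_prefill_mask(n_image_tokens: int, n_text_tokens: int) -> list[list[bool]]:
    """Return a mask of shape (n_text_tokens, n_image_tokens + n_text_tokens).

    Row i belongs to the query at text position i; True means "may attend".
    Assumption: image latents are always visible, text positions are causal.
    """
    mask = []
    for i in range(n_text_tokens):
        image_part = [True] * n_image_tokens                # the "prefill prompt"
        text_part = [j <= i for j in range(n_text_tokens)]  # causal over characters
        mask.append(image_part + text_part)
    return mask


# Toy sizes: 3 image latents and 4 text tokens (bos plus 3 characters).
mask = build_prefill_mask(3, 4)
for row in mask:
    print("".join("x" if visible else "." for visible in row))
```

Note that the queries come only from the text positions, so the mask has one row per character rather than one row per concatenated token.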

TrorYongOCR also implements recent Transformer techniques: Rotary Positional Embedding (RoPE) in the attention mechanism, and the Sigmoid Linear Unit (SiLU) and Gated Linear Unit (GLU) in the MLP of the Transformer block.
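To make the RoPE idea concrete, here is a small pure-Python sketch. It assumes the interleaved-pair convention and the commonly used base of 10000; the helper names `apply_rope` and `dot` are hypothetical, and TrorYongOCR's actual implementation may pair dimensions differently.

```python
import math


def apply_rope(vec: list[float], position: int, base: float = 10000.0) -> list[float]:
    """Rotate consecutive pairs (x0, x1), (x2, x3), ... by position-dependent angles."""
    if len(vec) % 2 != 0:
        raise ValueError("RoPE needs an even dimension")
    dim = len(vec)
    out = []
    for k in range(0, dim, 2):
        theta = position * base ** (-k / dim)  # lower frequency for later pairs
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        x, y = vec[k], vec[k + 1]
        out.extend([x * cos_t - y * sin_t, x * sin_t + y * cos_t])
    return out


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.7, 0.5]
k = [1.0, 0.4, -0.6, 0.9]

# Both pairs of positions have the same relative offset (2), so the scores match.
score_a = dot(apply_rope(q, 5), apply_rope(k, 3))
score_b = dot(apply_rope(q, 12), apply_rope(k, 10))
print(abs(score_a - score_b) < 1e-9)
```

The property checked at the end is the reason RoPE is attractive: the attention score depends on the relative offset between query and key, not on their absolute positions.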

An overview of the TrorYongOCR architecture is shown in Figure 1.

Model Configuration

The model configuration is as follows. The input image dimension is \((H, W) = (32, 128)\), where \(H\) and \(W\) are the height and width of the image respectively, the patch size is \((4, 8)\), and the block size (maximum number of input tokens) is \(192\). The Transformer has \(4\) blocks, each with embedding dimension \(d_{model}=384\) and \(h=6\) heads. The encoding blocks (blocks \(1\) to \(3\)) have feedforward dimension \(d_{feedforward}=2 \times d_{model}=768\), and the decoding block has \(d_{feedforward}=4 \times d_{model}=1536\) (see Table 1).

| Layer | \(d_{model}\) | \(h\) | \(d_{feedforward}\) | Role |
|---|---|---|---|---|
| 1 | 384 | 6 | 768 | Encoder |
| 2 | 384 | 6 | 768 | Encoder |
| 3 | 384 | 6 | 768 | Encoder |
| 4 | 384 | 6 | 1536 | Decoder |
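The numbers above can be checked with a few lines of arithmetic. This is only a worked calculation from the stated sizes; the variable names are illustrative and are not taken from the package.

```python
img_h, img_w = 32, 128
patch_h, patch_w = 4, 8
n_channel = 3
d_model, n_head = 384, 6

n_patches = (img_h // patch_h) * (img_w // patch_w)  # 8 rows x 16 columns of patches
patch_dim = patch_h * patch_w * n_channel            # raw values per flattened patch
head_dim = d_model // n_head                         # per-head embedding size
ff_encoder = 2 * d_model                             # encoding blocks
ff_decoder = 4 * d_model                             # decoding block

print(n_patches, patch_dim, head_dim, ff_encoder, ff_decoder)  # 128 96 64 768 1536
```

So each image is encoded as 128 patch tokens, and every attention head works with 64 dimensions.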

The Python code for this configuration is given below.

    config = TrorYongConfig(
        img_size=(32, 128),
        patch_size=(4, 8),
        n_channel=3,
        vocab_size=len(tokenizer),
        block_size=192,
        n_layer=4,
        n_head=6,
        n_embed=384,
        dropout=0.1,
        bias=True,
    )

Compared to PARSeq

PARSeq is an encoder-decoder architecture. Its text decoder uses the position embedding as the query vector, the character embedding (token embedding plus position embedding) as the context vector, and the latent state from the image encoder as memory for the cross-attention mechanism (see Figure 3 of Bautista and Atienza 2022).

Compared to DTrOCR

DTrOCR is a decoder-only architecture. The image embedding (patch embedding plus position embedding) is concatenated with the input character embedding, and a causal self-attention mechanism is applied to the concatenation from layer to layer to generate text autoregressively (see Figure 2 of Fujitake 2024). A [SEP] token is added at the beginning of the input character embedding to indicate sequence separation; it is equivalent to the bos token in TrorYongOCR.

Fine-tune Tutorial

My video tutorial on fine-tuning TrorYongOCR is available below.

Result

I used different screenshots to test the trained model. For characters in fonts present in the training dataset, the trained model recognizes text fairly well. However, for characters in fonts absent from the training dataset, the trained model performs poorly. For instance, characters in the Khmer Muol font can completely break the trained model regardless of image aspect ratio.

Deployment

I deploy the pretrained model (best-model-90epoch.pt) on KrorngAI space here.

Implementation

TrorYongOCR is implemented as a PyPI package and can be installed via

    pip install tror-yong-ocr

More detail is given here.

Training Detail

The public pretrained weights of TrorYongOCR can be found here. They were obtained by training on the seanghay/khmer-hanuman-100k and SoyVitou/KhmerSynthetic1M datasets. Since there is no benchmark dataset for the Khmer language, the metric I can share here is that my model achieves a training loss and a validation loss of around 0.2.

Hyperparameters

The hyperparameters for the learning rate are given in Table 2. For the optimizer, I used AdamW (Adam with decoupled weight decay), whose hyperparameters can be found in Table 3.

| Batch size | Grad Accum Step | \(lr_{max}\) | \(lr_{min}\) | Schedule | Warmup |
|---|---|---|---|---|---|
| 256 | 2 | \(7\times 10^{-3}\) | \(3.5\times 10^{-4}\) | cosine | 10% |

| \(\beta_1\) | \(\beta_2\) | weight decay | \(\varepsilon\) |
|---|---|---|---|
| \(0.9\) | \(0.999\) | \(0.001\) | \(10^{-8}\) |
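For readers who want to see what a cosine schedule with 10% warmup looks like with these values, here is a minimal sketch. It assumes a linear warmup up to \(lr_{max}\) followed by cosine decay down to \(lr_{min}\); the exact shape used during training is not specified here, and the function name `lr_at` is hypothetical.

```python
import math


def lr_at(step: int, total_steps: int, lr_max: float = 7e-3,
          lr_min: float = 3.5e-4, warmup_frac: float = 0.10) -> float:
    """Learning rate at a given optimizer step (assumed warmup and decay shape)."""
    warmup_steps = max(1, round(warmup_frac * total_steps))
    if step < warmup_steps:
        # Linear warmup: reaches lr_max on the last warmup step.
        return lr_max * (step + 1) / warmup_steps
    # Cosine decay from lr_max down to lr_min over the remaining steps.
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(progress, 1.0)
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


total = 1000  # illustrative number of optimizer steps
for s in (0, 99, 100, 550, 1000):
    print(s, f"{lr_at(s, total):.6f}")
```

With gradient accumulation, `step` here would count optimizer updates rather than individual mini-batches.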

Weight Initialization

I initialize the weights the way SOTA models regularly do. As an exception, the position embedding used in the decoding block is initialized with \(std=1.0\).

The code to initialize the weights is given below.

    from typing import Sequence

    import torch.nn as nn


    def init_weights(self, module: nn.Module, name: str = '', exclude: Sequence[str] = ()):
        """Initialize the weights using the typical initialization schemes used in SOTA models."""
        # Skip modules whose name starts with any excluded prefix.
        if any(map(name.startswith, exclude)):
            return
        if isinstance(module, nn.Linear):
            nn.init.trunc_normal_(module.weight, std=0.02)
            if module.bias is not None:
                nn.init.zeros_(module.bias)
        elif isinstance(module, nn.Embedding):
            nn.init.trunc_normal_(module.weight, std=0.02)
            if module.padding_idx is not None:
                module.weight.data[module.padding_idx].zero_()
        elif isinstance(module, nn.Conv2d):
            nn.init.kaiming_normal_(module.weight)
            if module.bias is not None:
                nn.init.zeros_(module.bias)
        elif isinstance(module, (nn.LayerNorm, nn.BatchNorm2d, nn.GroupNorm)):
            # Affine parameters are None when the norm layer is built without them.
            if module.weight is not None:
                nn.init.ones_(module.weight)
            if module.bias is not None:
                nn.init.zeros_(module.bias)

Datasets

In both datasets, the aspect ratio \(\frac{W}{H}\) varies from \(1\) to \(15\). Since the aspect ratio of my input image dimension is \(\frac{128}{32}=4\), images with extreme ratios are distorted heavily when resized. The characters inside those images are distorted just as much, which alters their features and makes it hard for the model to capture meaningful features in the transformed images.
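The amount of distortion can be quantified with a small calculation. The sketch below assumes a plain resize to \(32 \times 128\) without padding or cropping, which may differ from the actual preprocessing; the function name `horizontal_distortion` is hypothetical.

```python
TARGET_H, TARGET_W = 32, 128


def horizontal_distortion(width: int, height: int) -> float:
    """Horizontal scale divided by vertical scale after resizing to 32 x 128.

    1.0 keeps character shapes intact, values above 1 stretch them
    horizontally, and values below 1 squeeze them.
    """
    scale_x = TARGET_W / width
    scale_y = TARGET_H / height
    return scale_x / scale_y


# Example image sizes with aspect ratios 1, 4, and 15.
for w, h in [(64, 64), (256, 64), (960, 64)]:
    print(f"aspect ratio {w / h:4.1f} -> distortion {horizontal_distortion(w, h):.2f}")
```

Under this assumption, a square image is stretched to 4 times its natural width, while an image with aspect ratio 15 is squeezed to roughly a quarter of it.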

khmer-hanuman-100k

This dataset by YatSeanghay contains images with a variety of background colors and character colors. I split this dataset 9:1, with 90% used for the training set and 10% for the validation set. I trained TrorYongOCR on this dataset for 80 epochs, which gave me a relatively good model.

KhmerSynthetic1M

KhmerSynthetic1M is a dataset by SoyVitou. This dataset contains images in a gray monochromatic color palette (black, white, gray, etc.). The distribution of the number of tokens, i.e. the frequency of each number of tokens, is fairly uniform. In particular, the maximum number of tokens is around \(120\). This implies that there are images with an aspect ratio much larger than \(4\). So, I filtered out any images with a ratio larger than \(5\) and used the filtered dataset to train TrorYongOCR. Similarly, \(90\%\) is used for training and \(10\%\) for validation. The training ran for \(20\) epochs, and the model achieves a character error rate (CER) of 11.5% on the validation set.
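For reference, the character error rate is commonly computed as the total edit distance between predictions and references divided by the total number of reference characters. The sketch below follows that common definition over Unicode code points; this is an assumption, since the exact counting unit used for the figure above (code points, grapheme clusters, or tokens) is not stated, and the function names are hypothetical.

```python
def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance with unit cost for insert, delete, and substitute."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1]


def cer(references: list[str], hypotheses: list[str]) -> float:
    """Corpus-level CER: total edits divided by total reference characters."""
    total_edits = sum(edit_distance(r, h) for r, h in zip(references, hypotheses))
    total_chars = sum(len(r) for r in references)
    return total_edits / total_chars


print(cer(["abcd"], ["abxd"]))  # 0.25
```

Because Khmer text uses combining marks, counting code points and counting visual clusters can give different values for the same prediction.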

Combined dataset

Finally, I combined khmer-hanuman-100k and KhmerSynthetic1M and trained the model for \(1\) more epoch. (Note that I filtered out any images with an aspect ratio larger than \(5\).) All of my checkpoints can be found here.